Technical SEO focuses on the crawling, indexing and rendering of a website for search engines, to make it as easy as possible for the search engines to find, understand and see the value in the site for users.

Technical SEO is a discipline that handles the various, mainly ‘unseen’, elements of a website that can have an impact on organic search performance and the user experience of visitors to your site. These ‘under-the-bonnet’ factors include everything from site architecture and page speed to sitemaps, image optimisation and structured data. It’s definitely not always considered the flashiest part of SEO, but getting things right technically will make a huge difference to your organic visibility potential, and can absolutely transform site performance on many fronts.

In this technical SEO guide, we look at essential audits and tools that can be used to assess your website’s current standing technically and inform your strategy for improvement, finding, addressing and resolving things that are stopping you from reaching your full organic online potential.

Why is technical SEO important?

You can have the best-looking website in the world, containing the most amazing content, but if your site isn’t speaking the same ‘language’ as the search engines, they won’t want to risk sending relevant organic traffic your way. Search results reflect on the engine that lists them, so they want to be sure that the websites they rank well are providing users with highly relevant and valuable information. If they can’t easily crawl, understand and evaluate a website, they aren’t going to reward it with top rankings for relevant search terms.

Technical SEO helps ensure that a website is fully optimised behind the scenes, using the right code and elements to meet search engine best practice in all aspects of the site. If you get every other aspect of your website’s SEO strategy spot-on but neglect technical SEO, you will be limiting the performance you can achieve.

Starter steps for technical SEO

If your website is brand new, or has never had any SEO work done on it, and whoever built the site didn’t set these up as standard (which would be unusual), you may be missing some key elements needed for technical SEO and performance tracking. These include:

- Setting up Google Analytics 4 (GA4), including Google Tag Manager

- Setting up Google Search Console

- Setting up Bing Webmaster Tools

These platforms are necessary for helping to flag and fix technical SEO issues that your website may have and are also essential for tracking organic search performance over time. Once they are set up properly and starting to gather data, you’re better placed to start working on technical SEO for your website.

Using this technical SEO guide

Technical SEO is a discipline made up of hundreds of different elements, and some websites will have a more straightforward path than others to getting all of their ducks in a row, depending on individual circumstances.

Technical SEO isn’t something that is ever finished, because the online space, search engines and technology are always evolving. Things that have been implemented in one way originally might need to change at another point in the future, and the landscape of tech SEO never stops moving.

In light of this, for this technical SEO guide, we have focused on some of the major checks and changes you might need to make in the first weeks and months of starting an SEO project on a website, to help build a strong foundation for ongoing analysis and progress.

1 – Run a technical SEO audit

Tech SEO audits can be done in a few different ways, but generally it’s a matter of running your website through a number of different tools, then analysing the data they produce to determine which elements are causing issues and limiting your SEO performance.

At No Brainer, every technical SEO audit we do includes more than 120 different checks, so we’ve not included them all in this piece, but have outlined many of the crucial areas in which we often find SEO problems that need to be fixed for our clients.

Some of the most popular third-party tools used for technical SEO audits include:

Crawling tools for technical SEO audits

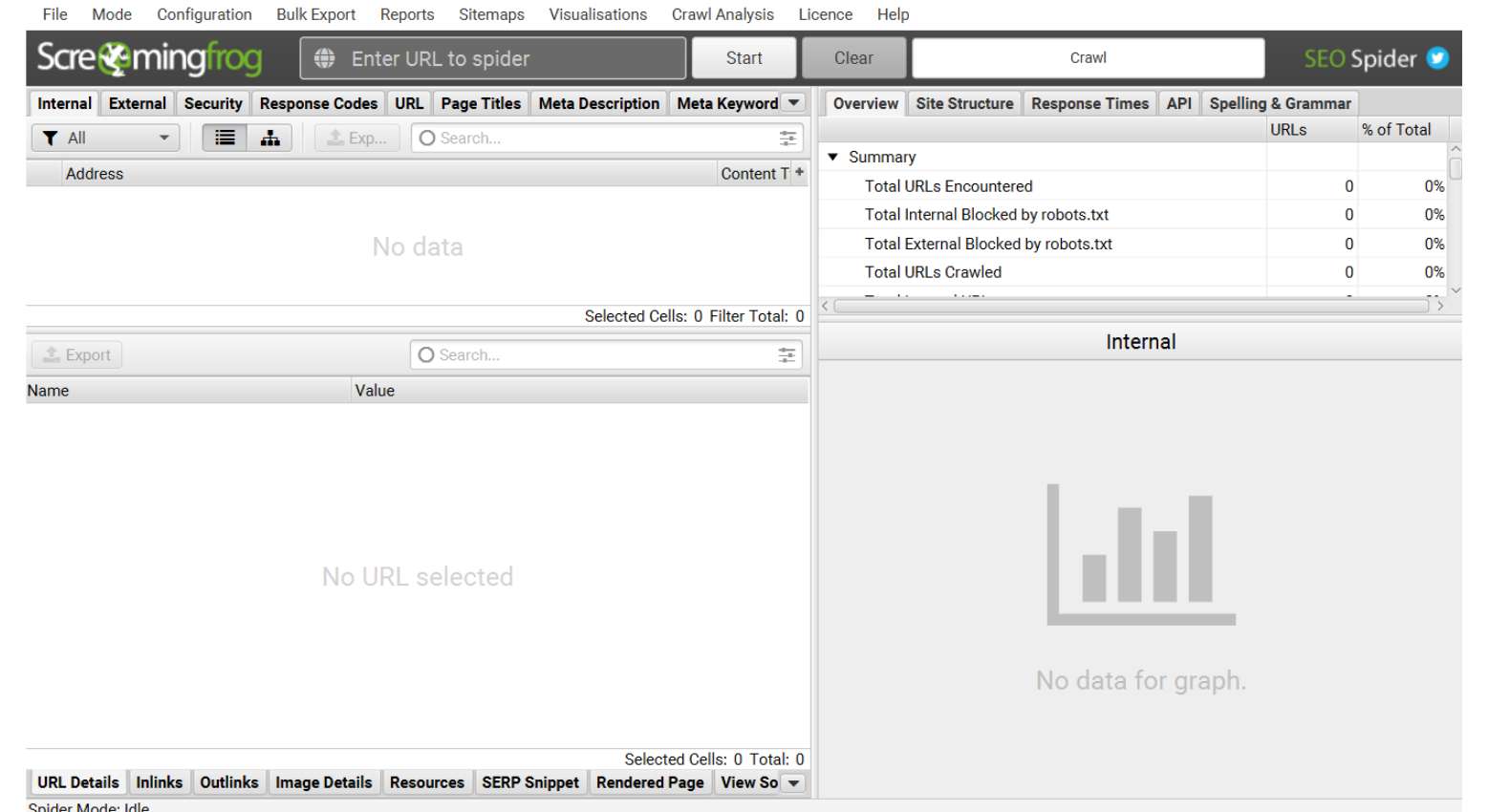

These kinds of tools ‘crawl’ the website (its pages, text, images, videos and any other file types), analysing site structure and flagging technical issues that would affect search engine crawlers. Common crawling tools used in technical SEO audits include:

- Screaming Frog

- Lumar (formerly known as DeepCrawl)

- Sitebulb

- Ahrefs

The size of your website will help determine how long a crawl might take, with smaller websites potentially taking minutes to gather the data, but enterprise websites often taking several hours, or even days, to crawl.

Some crawling tools include integration (via API) with other data sources so that you can match data up more easily in one place. For example, Screaming Frog’s paid version can extract Google Analytics and Google Search Console (GSC) data into your report so you don’t have to access this information separately. You can also access PageSpeed Insights through a Screaming Frog subscription, which gives you data on page speed, another important technical SEO audit component.

Analysing the SEO audit data

Crawl data can be quite daunting, especially if you have a large website. Understanding what it all means for your website and your current technical SEO standing is essential in making a plan to resolve and improve things. Each tool that you use will have its own way of presenting reports and information, but some of the more common reports and checks are likely to include:

Domain and site structure checks

These checks can highlight any issues or risks with the domain, subdomains or subfolders. The information architecture of the site will also be reviewed, to make sure that the site structure, navigation and URL structure are consistent, logical and SEO-friendly.

Response codes

These show whether a URL on your website can be crawled and indexed by search engines, and will flag things like broken links (404s), missing redirects, server errors and blocked pages (rightly or wrongly blocked by your robots.txt file).
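As a rough illustration of how a crawl report groups response codes, here is a minimal Python sketch. The category names and the `crawl_results` data are our own assumptions for the example, not the output of any particular crawling tool.

```python
def classify_status(code: int) -> str:
    """Map an HTTP status code to a broad audit category (labels are illustrative)."""
    if 200 <= code < 300:
        return "ok"
    if 300 <= code < 400:
        return "redirect"
    if code in (404, 410):
        return "broken"
    if 400 <= code < 500:
        return "client error"
    if 500 <= code < 600:
        return "server error"
    return "unknown"


# Hypothetical crawl output: URL -> status code returned by the server.
crawl_results = {
    "https://example.com/": 200,
    "https://example.com/old-page": 301,
    "https://example.com/missing": 404,
    "https://example.com/checkout": 503,
}

# Keep only the URLs that need a closer look.
issues = {
    url: classify_status(code)
    for url, code in crawl_results.items()
    if classify_status(code) != "ok"
}
```

Here `issues` would contain the redirect, the broken link and the server error, which is roughly the shortlist you would then work through in an audit.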

On-page elements

On-page elements for a web page that are missing, duplicate or otherwise don’t meet best practice guidelines can give conflicting signals to search engines. This could be page titles, meta descriptions or H tags. This can also include images that are not correctly optimised in terms of file size, alt text and file name.
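To show the kind of check involved, the sketch below flags missing, duplicate and overly long page titles. The `titles` data is made up, and the 60-character limit is only a common rule of thumb that we have assumed here, not an official threshold.

```python
from collections import Counter


def title_issues(titles: dict[str, str], max_length: int = 60) -> dict[str, list[str]]:
    """Return the title problems found for each URL (URLs without problems are omitted)."""
    # Count titles case-insensitively so "Blue widgets" and "blue widgets" match.
    counts = Counter(t.strip().lower() for t in titles.values() if t.strip())
    issues: dict[str, list[str]] = {}
    for url, title in titles.items():
        clean = title.strip()
        found = []
        if not clean:
            found.append("missing")
        else:
            if counts[clean.lower()] > 1:
                found.append("duplicate")
            if len(clean) > max_length:  # assumed rule-of-thumb limit
                found.append("too long")
        if found:
            issues[url] = found
    return issues


# Hypothetical page titles gathered from a crawl.
titles = {
    "/": "Home | Example",
    "/a": "Blue widgets",
    "/b": "blue widgets ",
    "/c": "",
}
problems = title_issues(titles)
```

The same pattern could be applied to meta descriptions or H1s by swapping in a different field and length limit.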

Structured data

Also known as schema markup, structured data helps search engines to understand what a page and its content offer to users. Incorrect implementation, or a lack of structured data, can stop a page being understood accurately or qualifying for enhanced search listings. More on this later.

Canonicals

Canonical tags are a snippet of code that helps search engines to determine which version of a page is the ‘master’ copy that should be ranked and helps prevent issues when multiple pages have identical content (known as duplicate content). Duplicate content can be an issue on many types of sites but is very common in ecommerce. For example, when filters are used for products, every variation on a product (size, colour etc) could have the same content and a different URL – potentially causing duplicate content issues.
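As a small sketch of what a canonical check looks for, the Python below pulls the canonical URL out of a page’s HTML using only the standard library. The sample page and URLs are invented for illustration; a real audit tool would run this kind of check across every crawled page.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> found in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr_map.get("href"):
            self.canonicals.append(attr_map["href"])


def find_canonicals(html: str) -> list[str]:
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


# Hypothetical product variation page pointing at its 'master' URL.
page = """
<html><head>
<title>Blue T-shirt, size M</title>
<link rel="canonical" href="https://example.com/products/t-shirt">
</head><body></body></html>
"""
canonicals = find_canonicals(page)
```

A page returning an empty list has no canonical tag, and a page returning more than one is sending mixed signals, so both cases are worth flagging.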

Security

Search engines place importance on website security, so this check looks at whether the website has the right security protocols in place, for example a valid SSL/TLS certificate so that pages are served over HTTPS and transactions can be made securely.

Hreflang

For websites that have an international audience, or are multilingual, having the hreflang HTML attribute correctly implemented helps to ensure that people searching in a specific country or language are likely to see the matching version of the site in their results. If it is implemented incorrectly, or not used at all, users can get confusing and irrelevant results for your site when searching.
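To make this more concrete, here is a minimal sketch that generates the hreflang link tags for a set of alternate versions of a page. The URLs and the `hreflang_tags` helper are hypothetical; the general idea is that each version of the page lists every alternate, including itself, plus an optional `x-default` fallback.

```python
from html import escape


def hreflang_tags(alternates: dict[str, str], default_url: str) -> list[str]:
    """Build <link rel="alternate"> tags for each language/region code."""
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}">'
        for code, url in sorted(alternates.items())
    ]
    # x-default tells search engines which URL to use when no version matches.
    tags.append(
        f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}">'
    )
    return tags


# Hypothetical alternate versions of the same page.
alternates = {
    "en-GB": "https://example.com/uk/",
    "en-US": "https://example.com/us/",
    "de-DE": "https://example.com/de/",
}
tags = hreflang_tags(alternates, "https://example.com/")
```

The resulting tags would sit in the `<head>` of each version of the page.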

Robots.txt checks

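A website’s robots.txt file tells search engine crawlers which parts of the site they should and shouldn’t crawl. It controls crawling rather than indexing, so a blocked URL can still be indexed if other pages link to it; a noindex directive is the usual way to keep a page out of the index. When this file is organised and managed properly, it can have a positive SEO impact.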
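A quick way to sanity-check robots.txt rules is with Python’s built-in `urllib.robotparser`. The sketch below parses an example file (the rules and URLs are assumptions for illustration) and asks whether a generic crawler may fetch a few paths. Note that Python’s parser applies the first matching rule, which may differ from how individual search engines resolve conflicting rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for example.com.
robots_txt = """\
User-agent: *
Disallow: /checkout/
Disallow: /search
Allow: /

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# Ask whether a crawler matching "*" may fetch each URL.
can_fetch_home = parser.can_fetch("*", "https://example.com/")
can_fetch_checkout = parser.can_fetch("*", "https://example.com/checkout/basket")
can_fetch_search = parser.can_fetch("*", "https://example.com/search?q=shoes")

# Sitemap URLs declared in the file (site_maps() needs Python 3.8 or later).
sitemaps = parser.site_maps()
```

In this example the home page is crawlable, while the checkout and internal search URLs are blocked.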

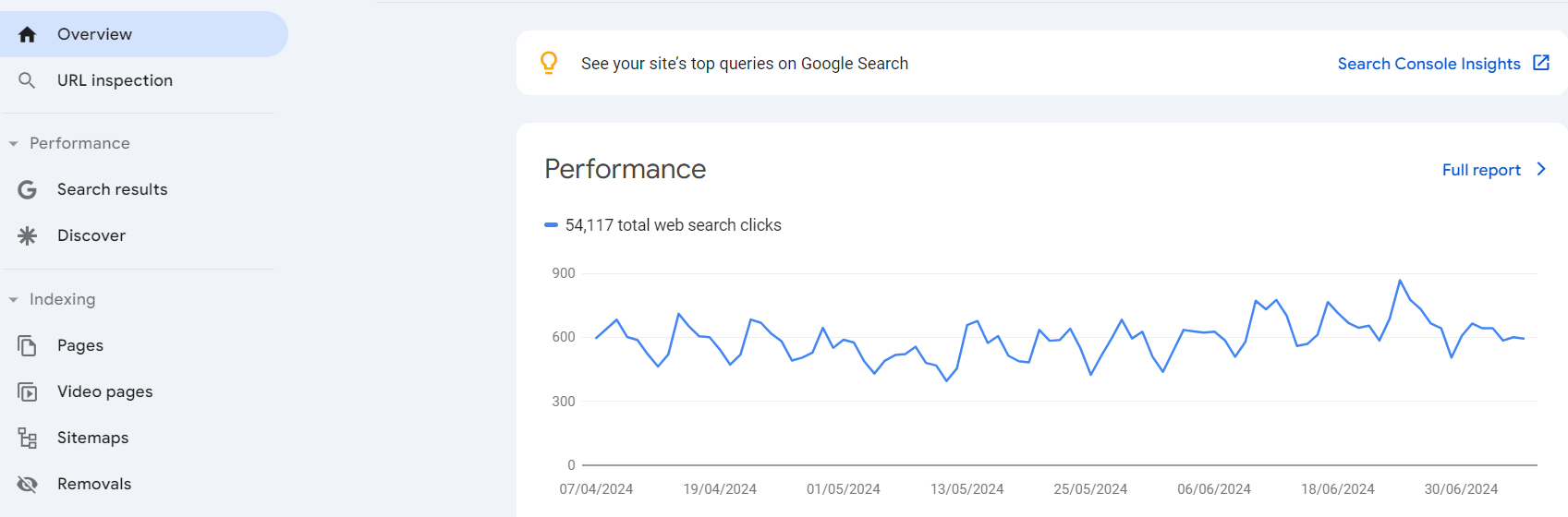

2 – Review Google Search Console (GSC) reports

GSC gives you useful information about how Google views your website and can flag issues that could be compromising your current SEO performance. The reports available include:

- Overview – showing a top-line summary of your website’s ‘health’ according to Google, including any manual actions applied or any security issues flagged.

- URL inspection – showing the information that Google has on a specific individual page of your website, rather than the site as a whole.

- Performance: Search results – showing how many users have seen your site in search results, how many have clicked on the result, the search queries they used and the average position in search results you’ve achieved.

- Performance: Discover / Google News – showing the same information as above for your website appearing in Google’s ‘Discover’ and ‘News’ results listings.

- Page indexing – showing the index status of all pages on your website, including pages that can’t be indexed by Google when crawling your site.

- Video page indexing – showing how many pages your site has with videos on and their indexed status.

- Sitemap indexing – showing any sitemaps that have been submitted for your website and flagging any errors found in them.

- Removals – showing any pages that you have asked Google to temporarily remove from its search results.

- Page experience – showing the percentage of pages on your website that Google considers to offer a good page experience to users.

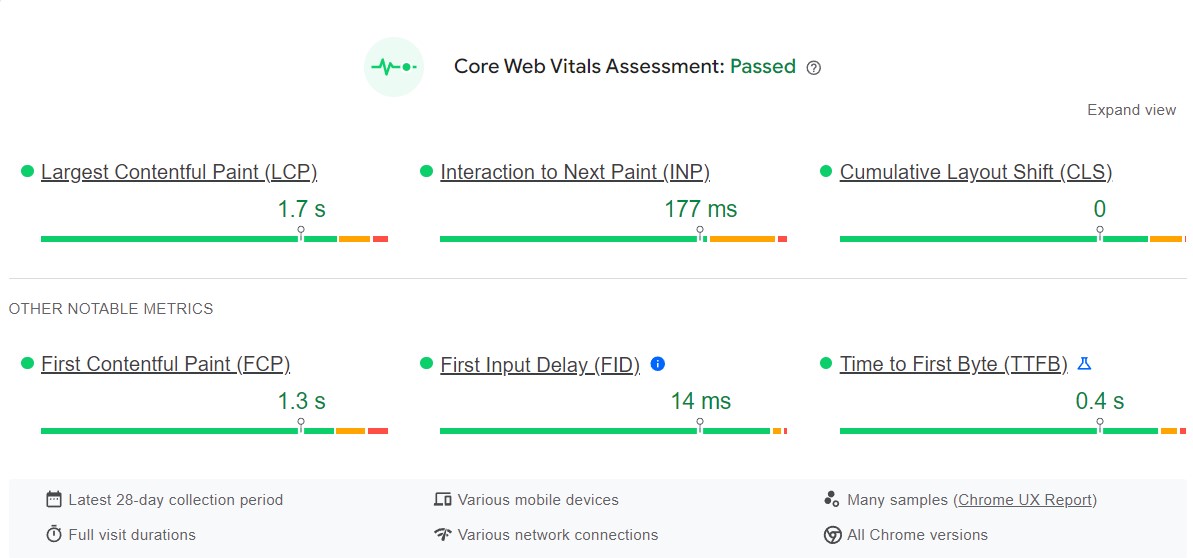

- Core Web Vitals – showing page performance against the set of Google metrics grouped under the label of Core Web Vitals.

You can find a full list of GSC reports and what they all mean here.

It’s important to check these reports regularly and take action, when needed, if errors or manual actions and warnings appear, as they can seriously impact your website’s search performance.

3 – Assess and optimise page speed

According to research, 40% of visitors will abandon a website if a page takes more than three seconds to load. As well as being a user experience and conversion factor, page speed is also one of the many things that can influence search engine rankings.

You can use PageSpeed Insights to check your current page speeds on mobile and desktop. There are many things you can do to improve page load times, including:

- Compress files – images, CSS, HTML and JavaScript files can all be compressed so that they take up less space and therefore load more quickly.

- Remove or minify bloated code – messy code, often introduced to your website when it’s built or by various plugins and tools, can slow down page loading.

- Reduce redirects – every time a page on your site redirects to another one, it takes longer for the end page to load. If there are several redirects for a URL, this makes the problem worse. Assessing your redirects and simplifying them if possible can help with page load times.
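To illustrate the redirect point, here is a minimal sketch that follows each URL through a redirect map and picks out chains with more than one hop. The `redirects` mapping is made-up data standing in for an export from a crawl or a server configuration.

```python
def redirect_chain(start: str, redirects: dict[str, str], max_hops: int = 10) -> list[str]:
    """Follow a URL through a redirect map and return every URL visited."""
    chain = [start]
    while chain[-1] in redirects and len(chain) <= max_hops:
        next_url = redirects[chain[-1]]
        looped = next_url in chain
        chain.append(next_url)
        if looped:  # redirect loop detected, so stop following
            break
    return chain


# Hypothetical redirect map: source URL -> destination URL.
redirects = {
    "/summer-sale-2022": "/summer-sale",
    "/summer-sale": "/sale",
    "/about-us": "/about",
}

chains = {url: redirect_chain(url, redirects) for url in redirects}

# A chain of three or more URLs means at least two hops before the final page.
multi_hop = {url: chain for url, chain in chains.items() if len(chain) > 2}
```

Here `/summer-sale-2022` takes two hops to reach `/sale`, so pointing it straight at `/sale` would remove one round trip.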

4 – Identify thin and duplicate content

There are many reasons why a website can have duplicate content issues. For example, ecommerce websites often have many variations of a single product, each on their own page (different colours, sizes etc), and if these aren’t handled properly from a technical SEO point of view, search engines can see the pages as all having the same content, which can dilute ranking signals and hold back performance.

Other websites may have a significant amount of ‘boilerplate’ content that appears on all pages of a certain type. Again, this could be considered duplicate content by search engines.
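As a simplified picture of how exact duplicates can be spotted, the sketch below hashes the normalised text of each page and groups URLs that share a hash. The page text is invented, and real tools typically go further by detecting near-duplicates, which this example does not attempt.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash page text after collapsing whitespace and lowercasing it."""
    normalised = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def duplicate_groups(pages: dict[str, str]) -> list[list[str]]:
    """Return groups of URLs whose body text is identical once normalised."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical body text extracted from three URLs.
pages = {
    "/t-shirt?colour=blue": "Soft cotton T-shirt.  Machine washable.",
    "/t-shirt?colour=red": "soft cotton t-shirt. machine washable.",
    "/hoodie": "Warm fleece hoodie with front pocket.",
}
duplicates = duplicate_groups(pages)
```

In this example the two colour variations of the T-shirt are grouped together as duplicates, while the hoodie page stands alone.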

Popular tools that crawl your website and identify duplicate content issues include:

Thin content is another potential issue, where a web page contains content that lacks depth or usefulness to visitors. It could be a page that exists mainly for ads or affiliate links, uses poor AI-generated content, copies content from another website, or simply doesn’t have much in the way of meaningful words on the page.

There are many tools that can identify thin content as part of an audit process, including:

- Semrush

- Screaming Frog – sorting pages by word count
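The word count approach can be sketched in a few lines of Python. The 300-word threshold below is purely an assumption for the example; a low word count is only a prompt to review a page, not proof that it is thin.

```python
def thin_pages(pages: dict[str, str], min_words: int = 300) -> list[tuple[str, int]]:
    """Return (url, word_count) pairs below the threshold, lowest count first."""
    counts = [(url, len(text.split())) for url, text in pages.items()]
    return sorted(
        (item for item in counts if item[1] < min_words),
        key=lambda item: item[1],
    )


# Hypothetical body text extracted from a crawl.
pages = {
    "/about": "word " * 450,
    "/tag/misc": "Misc posts",
    "/contact": "Call us on the number below",
}
candidates = thin_pages(pages)
```

Sorting by word count puts the emptiest pages at the top, which is a sensible place to start a manual review.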

Rectifying duplicate and thin content can be quite a big task, depending on the size of your website and the extent of the problem, but you can prioritise the areas of the site that mean the most to your bottom line to tackle first.

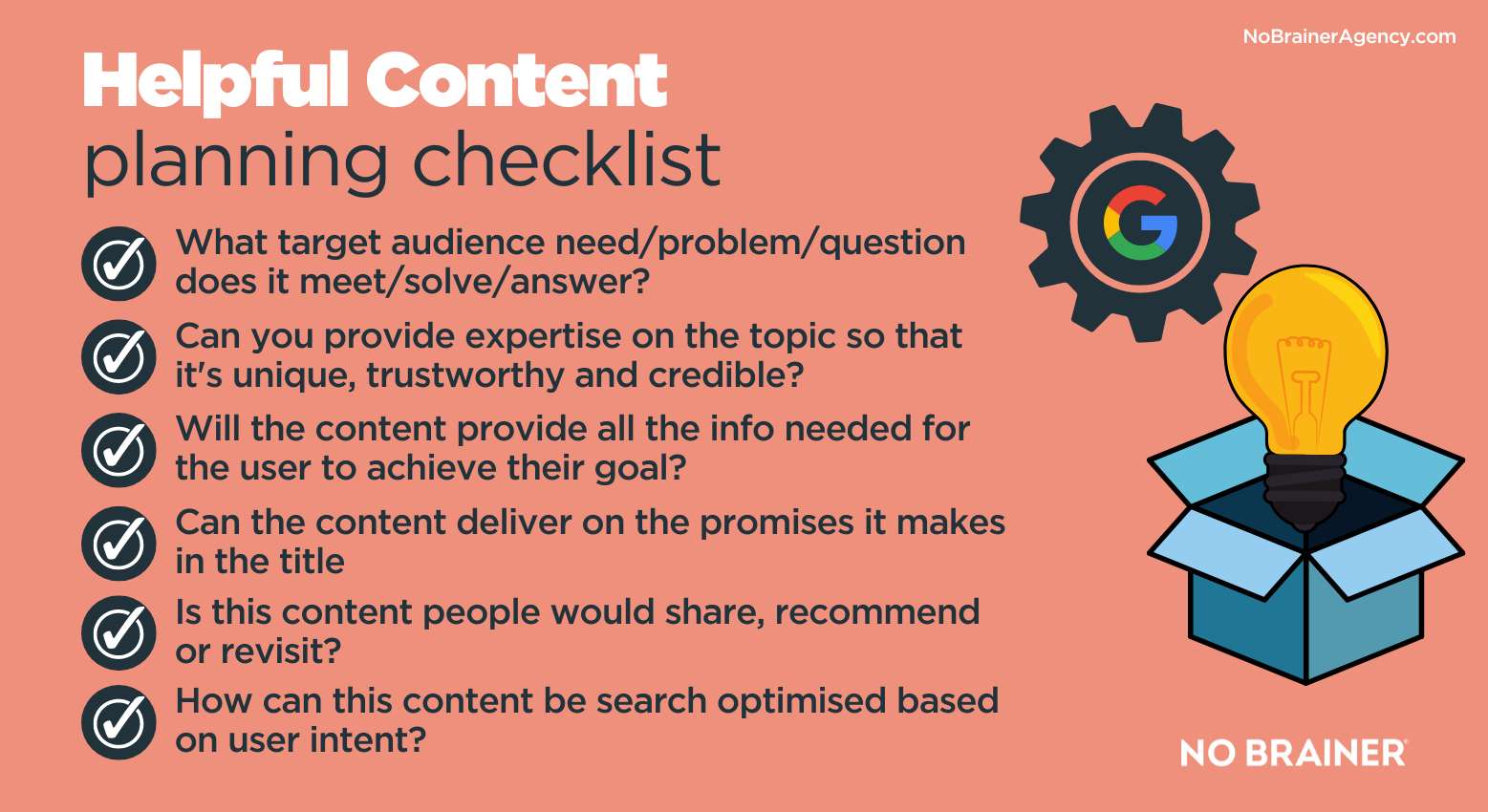

The content you replace it with will need to align with Google E-E-A-T principles to be as effective as possible. Following our tips for creating helpful content can give you a great starting point.

5 – Check for Structured Data

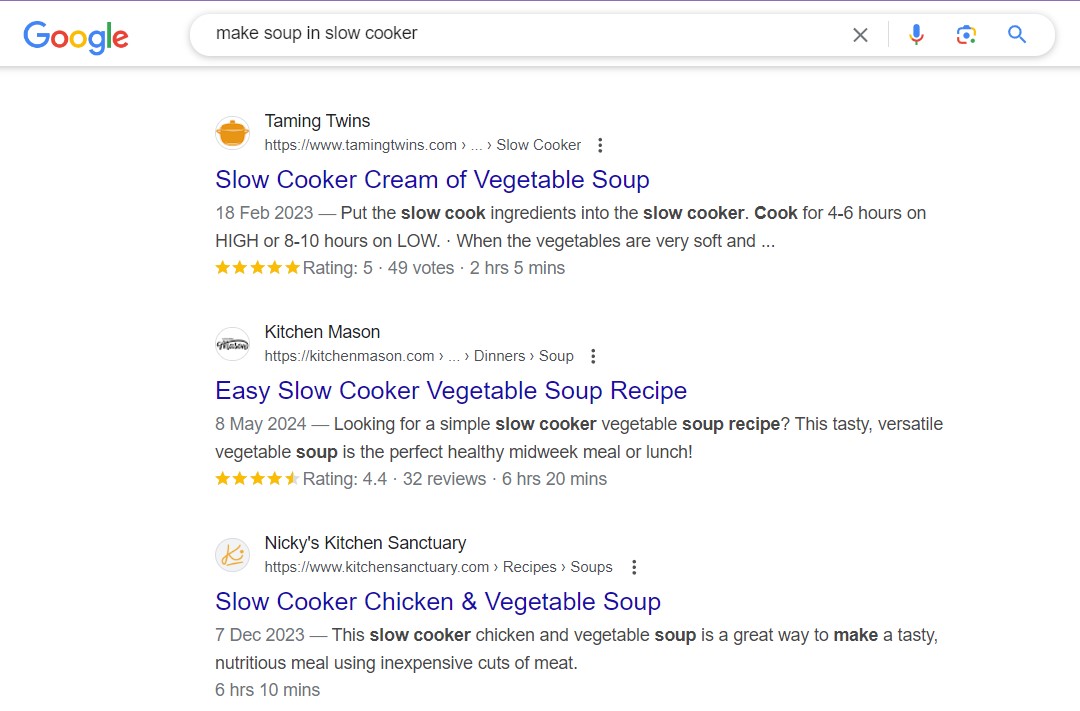

While the presence (or absence) of structured data (often called schema markup) in the code of a website isn’t a direct ranking factor for organic search, correct implementation can mean that pages appear in search results with ‘rich snippets’ or ‘rich results’. These are extra details shown within a search listing, which can lead to more clicks. They can be anything from review ratings in the SERPs to product price or stock information, recipe details for food searches, event details and more.

You can use Google’s schema tools to check whether pages on your website already have structured data in place and whether it is working properly. If not, you can fix the issues to make sure that you’re giving your pages the best chance of receiving more clicks when they appear in search results.

View Google’s list of currently supported structured data.
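For a sense of what structured data looks like in practice, here is a minimal sketch that builds a JSON-LD snippet for a product using schema.org vocabulary. The product details are invented, and the properties actually needed for a given rich result should be checked against Google’s documentation.

```python
import json

# Hypothetical product data described with schema.org types.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example T-shirt",
    "offers": {
        "@type": "Offer",
        "price": "19.99",
        "priceCurrency": "GBP",
        "availability": "https://schema.org/InStock",
    },
}


def json_ld_script(data: dict) -> str:
    """Wrap a dictionary as a JSON-LD script tag ready to place in a page."""
    return (
        '<script type="application/ld+json">'
        + json.dumps(data, indent=2)
        + "</script>"
    )


snippet = json_ld_script(product)
```

The resulting script tag can be added to the page’s HTML and then checked with a structured data testing tool.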

6 – Check for cannibalisation

Cannibalisation is the rather dramatic way of referring to pages within your own website that compete with each other for the same keywords and serve roughly the same purpose for your audience and their intent, at least as far as search engines are concerned. This can mean that competing pages all harm each other’s rankings because it’s not clear to the search engines which page they should serve in results for relevant queries.

You can check for cannibalisation using any tool that tracks your website rankings on its various pages, such as:

A more manual (but free) route to checking for keywords that rank on several pages is to use GSC. You can look at the search results report and then filter by a specific query to see all the pages that are appearing in search results for that term and their average position. If there is more than one page showing, there is some level of cannibalisation involved and you can make a plan to resolve this.
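If you export that data, the same check can be sketched in Python. The rows below are made-up examples in a (query, page, average position) shape that we have assumed for illustration; an actual GSC export will have its own column layout.

```python
from collections import defaultdict

# Hypothetical rows: (query, page, average position).
rows = [
    ("technical seo audit", "/blog/technical-seo-guide", 6.2),
    ("technical seo audit", "/services/seo-audit", 11.8),
    ("page speed tips", "/blog/page-speed", 4.0),
]


def cannibalised_queries(rows):
    """Return queries that have more than one ranking page, best position first."""
    pages_by_query = defaultdict(list)
    for query, page, position in rows:
        pages_by_query[query].append((page, position))
    return {
        query: sorted(pages, key=lambda item: item[1])
        for query, pages in pages_by_query.items()
        if len(pages) > 1
    }


conflicts = cannibalised_queries(rows)
```

Here only ‘technical seo audit’ has two competing pages, so that is the query to investigate.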

7 – Review internal linking

The way that your website’s pages link to each other sends signals to search engines about which keywords are most relevant to each page and how themed content fits together, amongst other things.

If your website is full of link anchor text like ‘click here’, rather than utilising anchor text strategically, then you could be missing out from an SEO point of view. Orphan pages, which are not linked to by other pages on your site, can also be detrimental for SEO performance because they can’t be crawled and indexed as effectively as pages in a good site structure.

Reviewing your internal links will help to flag any orphan pages and highlight any links that could be better optimised for maximum SEO impact.
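Finding orphan pages comes down to comparing the pages you know exist (for example, from your sitemap) with the pages that receive at least one internal link. The sketch below uses invented data and a hypothetical `find_orphans` helper to show the idea.

```python
def find_orphans(all_pages: set[str], links: dict[str, set[str]], home: str = "/") -> set[str]:
    """Return pages that no other page links to (the home page is excluded)."""
    linked_to = set()
    for source, targets in links.items():
        # Ignore self-links, since they don't help crawlers discover the page.
        linked_to.update(target for target in targets if target != source)
    return all_pages - linked_to - {home}


# Hypothetical data: known pages (e.g. from a sitemap) and internal links from a crawl.
all_pages = {"/", "/services", "/blog", "/blog/old-post"}
links = {
    "/": {"/services", "/blog"},
    "/services": {"/"},
    "/blog": {"/"},
}
orphans = find_orphans(all_pages, links)
```

In this example `/blog/old-post` is in the sitemap but has no internal links pointing to it, so it would be flagged as an orphan.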

Semrush and Ahrefs both have useful tools that can help you review and fix your internal links efficiently.

Our technical checklist isn’t completely exhaustive, but we think it covers the main foundations of a solidly search-friendly website. If you would like to chat about how we can help your business with technical SEO or any other part of your SEO strategy, we’d love to hear from you. Use the form on this page to get in touch.